Reduce LLM Costs by 80%

Start training your SLM in < 7min – no data labelling required.

curl -fsSL https://distillabs.ai/install.sh | sh

Leverage Automated Fine-Tuning

Start Training in 30min

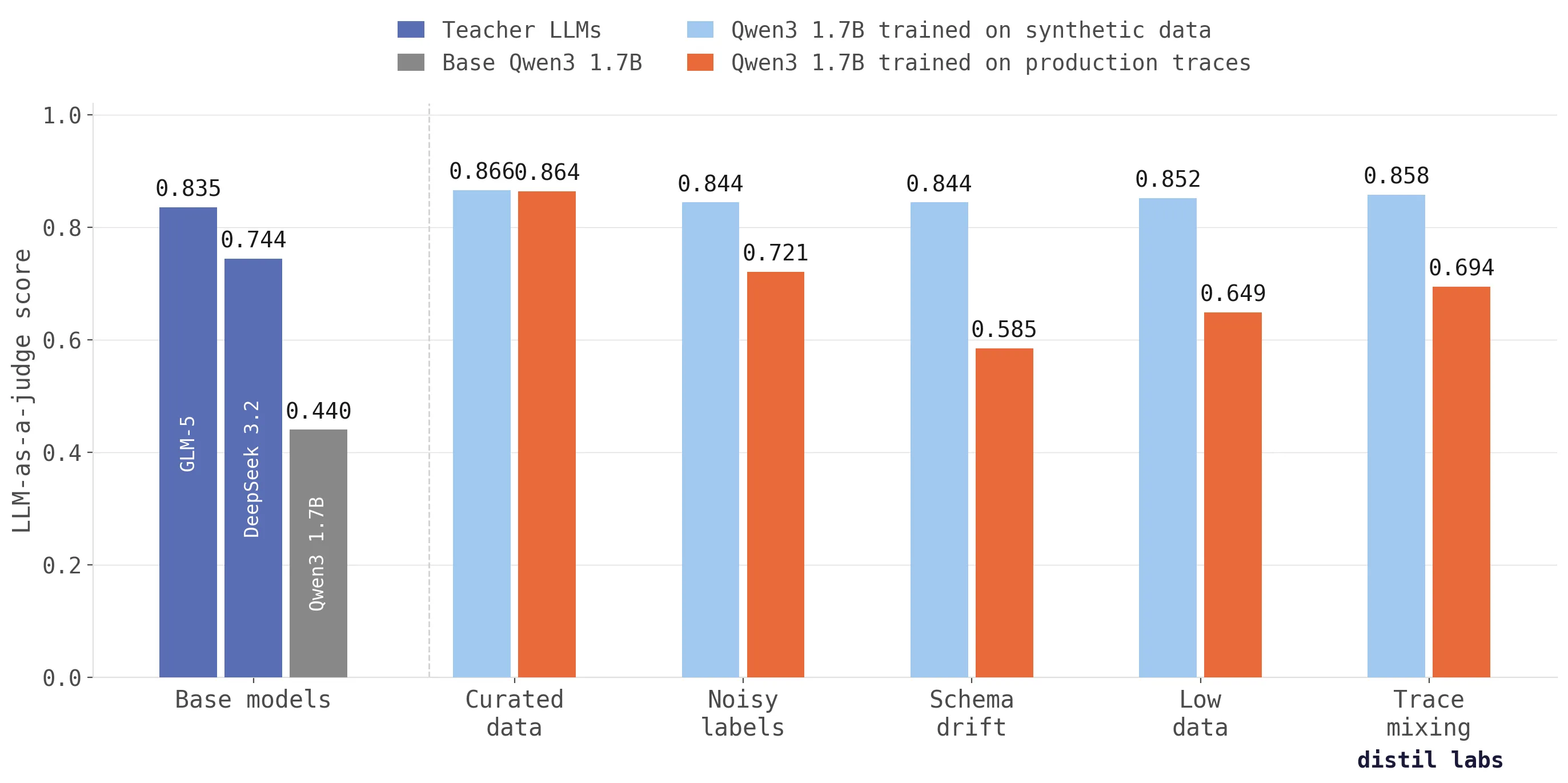

distil labs automatically creates synthetic data from your traces and fine-tunes a model for your task. Problem in, model out – the process is very simple:

- Upload your traces and system prompt

- Train your model

- Deploy with distil labs and integrate

50-90% Lower Inference Costs

Replace expensive LLM API calls with purpose-built small models. The platform handles the heavy lifting, so you can focus on building new features:

- 30-200x smaller models

- Accuracy on par with much larger models

- Latency as low as 50ms for most tasks
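To see what a 50-90% reduction would mean for a particular workload, the arithmetic can be sketched as follows. The token volume and per-million-token prices are hypothetical placeholders chosen for illustration, not distil labs or vendor pricing; substitute your own figures.

```python
def monthly_cost(tokens_per_month: int, price_per_million_tokens: float) -> float:
    """Cost in dollars for a monthly token volume at a given price per 1M tokens."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def savings_fraction(llm_cost: float, slm_cost: float) -> float:
    """Fraction of the LLM bill saved by switching to the SLM (0.8 means 80%)."""
    if llm_cost <= 0:
        raise ValueError("llm_cost must be positive")
    return (llm_cost - slm_cost) / llm_cost


# Hypothetical inputs, chosen only for illustration.
TOKENS_PER_MONTH = 500_000_000
LLM_PRICE = 10.0  # dollars per 1M tokens (assumed)
SLM_PRICE = 2.0   # dollars per 1M tokens (assumed)

llm_bill = monthly_cost(TOKENS_PER_MONTH, LLM_PRICE)
slm_bill = monthly_cost(TOKENS_PER_MONTH, SLM_PRICE)
print(f"LLM: ${llm_bill:,.0f}  SLM: ${slm_bill:,.0f}  "
      f"saved: {savings_fraction(llm_bill, slm_bill):.0%}")
```

With real numbers, hosting costs for the small model would also need to be included on the SLM side of the comparison.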

30M+ people use distil labs models today

From Problem to Model

$ distil model create my-classifier

ID: $MODEL_ID

Name: my-classifier

Created At: 2026-03-23 15:11:08

$ distil model upload-traces $MODEL_ID --data ./traces

Upload successful. Upload ID: $UPLOAD_ID

01 Upload Traces

Create a model and upload 5k-10k traces from your current agent. The platform supports a range of text-processing tasks: classification, QA, tool calling, multi-turn tool calling, and more.

$ distil model run-training $MODEL_ID

Kicked off SLM training ID $TRAINING_ID

$ distil model training $MODEL_ID

Training ID: $TRAINING_ID

Status: ◐ Distilling

02 Train Model

Start training with a single command. Get feedback on task performance in minutes and have a model ready in a few hours. Trained SLMs consistently match frontier models 100x larger.

$ distil model deploy remote $MODEL_ID

Training ID: $TRAINING_ID

Deployment ID: efd60b29-...-4f56dbc0b13f

Status: ✓ Active

URL: https://your.deployment.url/$TRAINING_ID/v1

Secrets api_key: QtwT1Ah7Jaf6DPIHBPDMYdNTKT6ujrnn1hZkGtsb21U

$ distil model invoke $MODEL_ID

╭────────────────────────────────────────────────────────────────────────────────────────────────────╮

│ ℹ Run uv run .../$TRAINING_ID/remote_client.py --question "Your question here" to invoke the model │

╰────────────────────────────────────────────────────────────────────────────────────────────────────╯

03 Deploy & Invoke

Deploy your trained model to a hosted endpoint with one command, then invoke it immediately. No infrastructure to set up — just deploy and call.
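For teams that want to script these steps, the CLI calls shown above could be wrapped in Python roughly as follows. This is a minimal sketch: the `distil` subcommands and the `--data` flag are taken from the transcripts above, while the function names and the two-phase split are illustrative assumptions. Because training takes hours, the deploy phase is kept separate and would be run only once the status check reports that training has finished.

```python
import subprocess
from typing import Callable, List, Sequence


def start_training_commands(model_id: str, traces_dir: str) -> List[List[str]]:
    """CLI calls for uploading traces, starting training, and checking status."""
    return [
        ["distil", "model", "upload-traces", model_id, "--data", traces_dir],
        ["distil", "model", "run-training", model_id],
        ["distil", "model", "training", model_id],
    ]


def deploy_commands(model_id: str) -> List[List[str]]:
    """CLI calls for deploying a trained model and printing the invoke hint."""
    return [
        ["distil", "model", "deploy", "remote", model_id],
        ["distil", "model", "invoke", model_id],
    ]


def _run_checked(argv: Sequence[str]) -> None:
    """Default runner: execute the command and raise if it exits non-zero."""
    subprocess.run(argv, check=True)


def run_all(
    commands: List[List[str]],
    runner: Callable[[Sequence[str]], object] = _run_checked,
) -> int:
    """Run each command in order with `runner`; return how many were run.

    With the default runner, the first failing command raises
    subprocess.CalledProcessError and later commands are skipped.
    """
    count = 0
    for argv in commands:
        runner(argv)
        count += 1
    return count
```

Passing a custom `runner` (for example, one that only prints the command) gives a dry run without requiring the CLI to be installed.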

from openai import OpenAI

# Just change the base URL; everything else stays the same
client = OpenAI(
    base_url="https://your.deployment.url/$TRAINING_ID/v1",
    api_key="your-distil-api-key",
)

response = client.chat.completions.create(
    model="model",
    messages=[{"role": "user", "content": "Classify: I want to return my order"}],
)

print(response.choices[0].message.content)
# → {"label": "return_request", "confidence": 0.97}

04 Integrate

Swap one URL in your existing code — that’s it. The distil labs endpoint is OpenAI-compatible, so any SDK or client that talks to OpenAI works out of the box.
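Since the endpoint follows the OpenAI chat completions convention, a client without the SDK is also possible. The sketch below builds the HTTP request with the standard library and parses a reply whose content is a JSON object like the one in the example output. It assumes the standard `/chat/completions` route under the base URL and Bearer-token authentication; the helper names and the `min_confidence` threshold are hypothetical, and the output schema depends on how your model was trained.

```python
import json
import urllib.request
from typing import Optional


def build_chat_request(base_url: str, api_key: str, user_text: str) -> urllib.request.Request:
    """Build a POST to the OpenAI-style /chat/completions route under base_url."""
    payload = {
        "model": "model",  # same placeholder model name as the SDK example
        "messages": [{"role": "user", "content": user_text}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def extract_label(response_body: str, min_confidence: float = 0.5) -> Optional[str]:
    """Pull the label out of a chat completion whose content is a JSON object.

    Returns None when the content is not valid JSON, lacks a label, or reports
    a confidence below min_confidence (an assumed, illustrative threshold).
    """
    content = json.loads(response_body)["choices"][0]["message"]["content"]
    try:
        parsed = json.loads(content)
    except json.JSONDecodeError:
        return None
    if not isinstance(parsed, dict) or "label" not in parsed:
        return None
    if parsed.get("confidence", 1.0) < min_confidence:
        return None
    return parsed["label"]
```

To send the request, pass it to `urllib.request.urlopen`, read and decode the response body, and hand the resulting string to `extract_label`.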

What Our Customers Say

We needed a small model that could power our product on an IBM P11, entirely on-premises. distil labs’ fine-tuned models allowed us to ship a self-contained solution where the SLM and our graph platform coexist on the same hardware. For customers in regulated industries, this means AI-powered query generation with complete data privacy – nothing ever leaves their environment.

David J. Haglin

Co-Founder and CTO at Rocketgraph

Using distil labs, we were able to spin up highly accurate custom small models tailored to our workflows in no time. Those models cut our inference costs by roughly 50% without sacrificing quality. The distil labs team was incredibly supportive as we got started and helped us get to production smoothly.

Lucas Hild

Co-Founder & CTO at Knowunity

The distil labs platform accelerated the release of our cybersecurity-specialized language model, KINDI, enabling faster iterations with greater confidence. As a result, we ship InovaGuard improvements sooner and continuously boost investigation accuracy with every release.

Samir Bennacer

Co-Founder and CTO at Octodet

Demos & Blog

The 10x Inference Tax You Don't Have to Pay

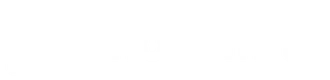

Benchmarking fine-tuned small language models (0.6B-8B) against 10 frontier LLMs across 8 datasets shows that task-specific SLMs match or beat frontier models at 10-100x lower inference cost.

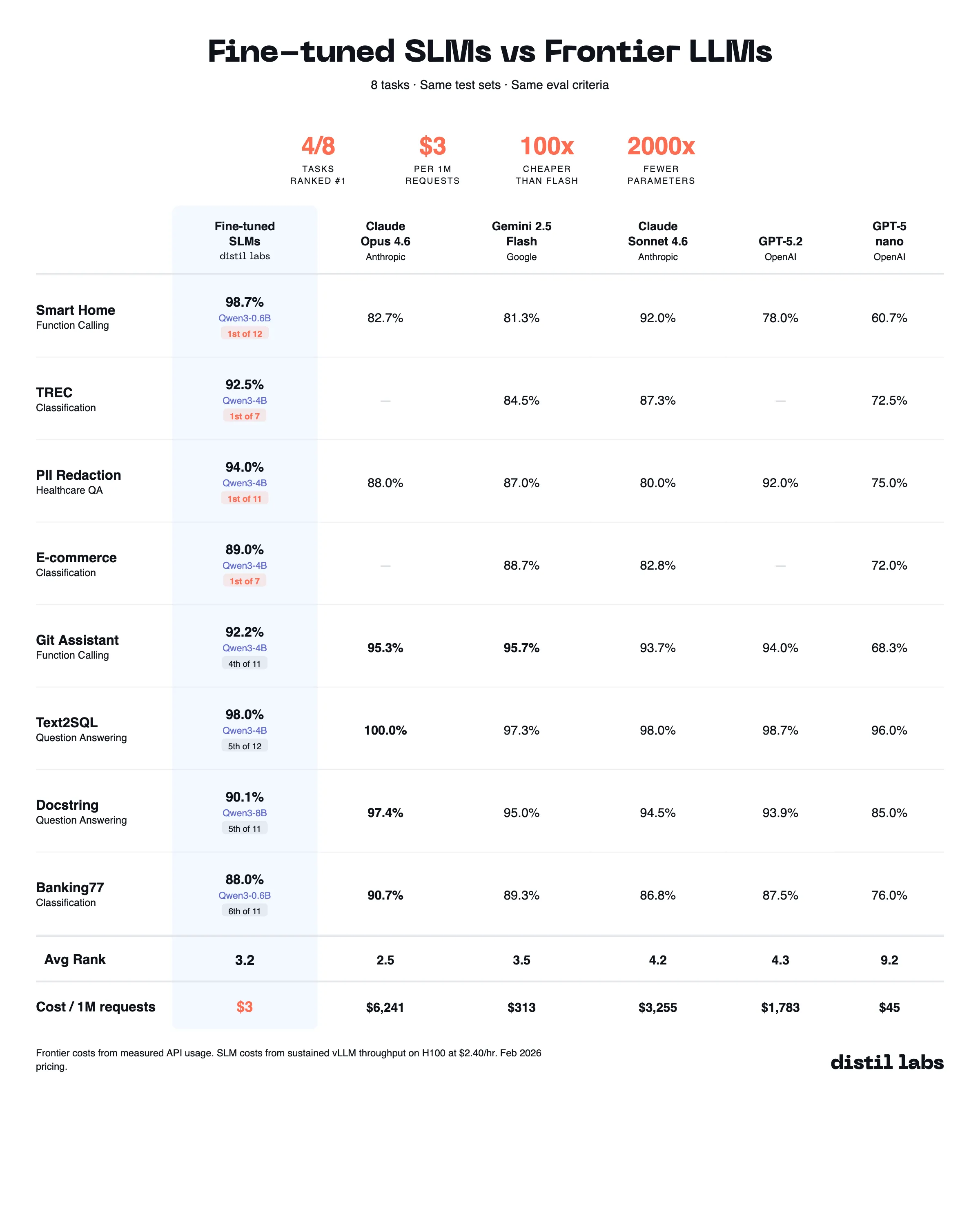

Why training on production traces fails (and what to do instead)

Training directly on production traces doesn't work as well as you'd expect. We tested five scenarios, and models trained on synthetic data generated from traces scored up to 26 percentage points higher in accuracy.

Train an SLM from your production traces with the distil labs Claude skill

A walkthrough of using the distil labs Claude skill to turn 327 noisy production traces into a fine-tuned Qwen3-1.7B multi-turn tool-calling model, deployed on a managed endpoint in a single conversation.
