Customers

Real-world production deployments of distilled small language models.

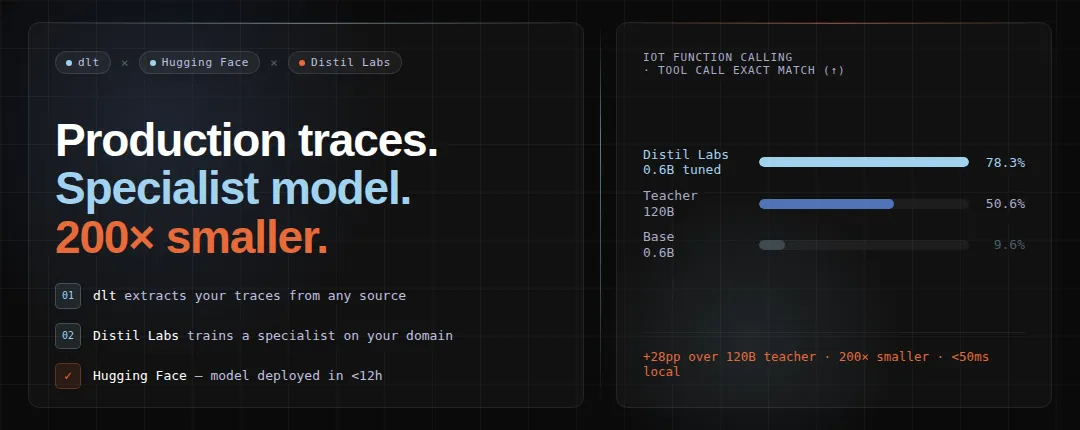

A 0.6B model outperformed a 120B LLM by 29 points - using dlt, distil labs, and Hugging Face

How to turn production LLM traces into a deployed specialist model using dlt for trace extraction and distil labs for training, achieving 79% exact match with a 0.6B model that beats a 120B teacher by 29 points.

How Knowunity used distil labs to cut their LLM bill by 68%

Knowunity, an edtech startup processing hundreds of millions of AI requests monthly, used distil labs to train a custom small language model that cut inference costs by 68% while improving classification accuracy from 81% to 93%.

Helping Rocketgraph's customers with an OpenCypher-specialized small language model

How distil labs partnered with Rocketgraph to fine-tune a small language model specialized in translating user questions to Rocketgraph-compliant Cypher queries on IBM Power hardware.

Teaching Small Language Models New Skills - Training a Local Cybersecurity Agent

How distil labs partnered with Octodet to train a small language model that outperforms LLMs 30x its size at analyzing cybersecurity logs, while running entirely on-premises to meet strict privacy requirements.