Blog & Demos

Tutorials, case studies, benchmarks, and open-source demos — everything you need to build with small language models.

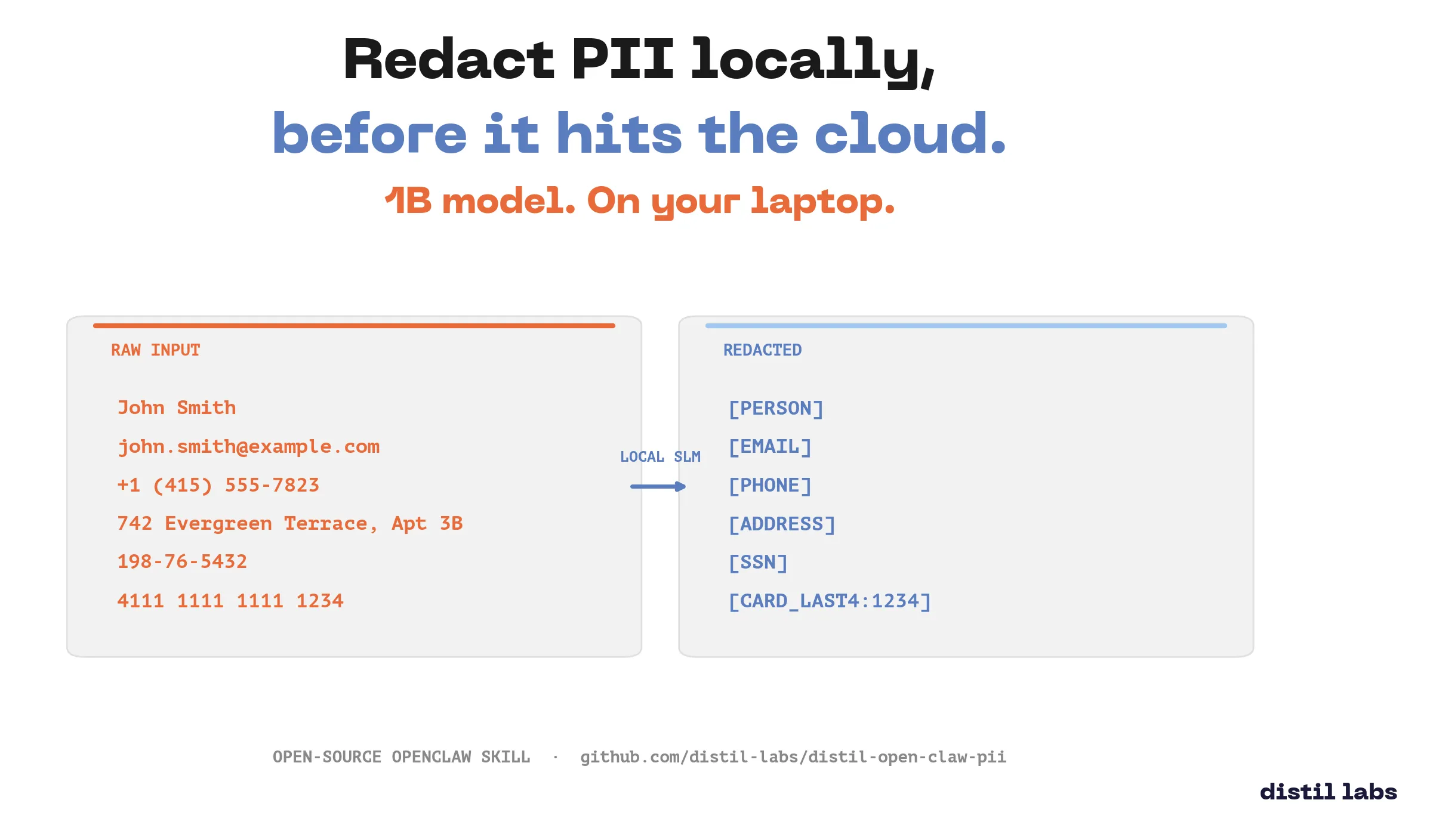

Distil PII Redactor: an OpenClaw Skill

Redact PII from text locally using a fine-tuned 1B-parameter model packaged as an OpenClaw skill. Your sensitive data never leaves your machine.

Autonomous Bug Fixing Agent with distil labs' SLM and Warp Oz

A self-healing loop that diagnoses production failures with a fine-tuned 0.6B SLM and applies the fix with Warp Oz — closing incidents in seconds, no humans paged.

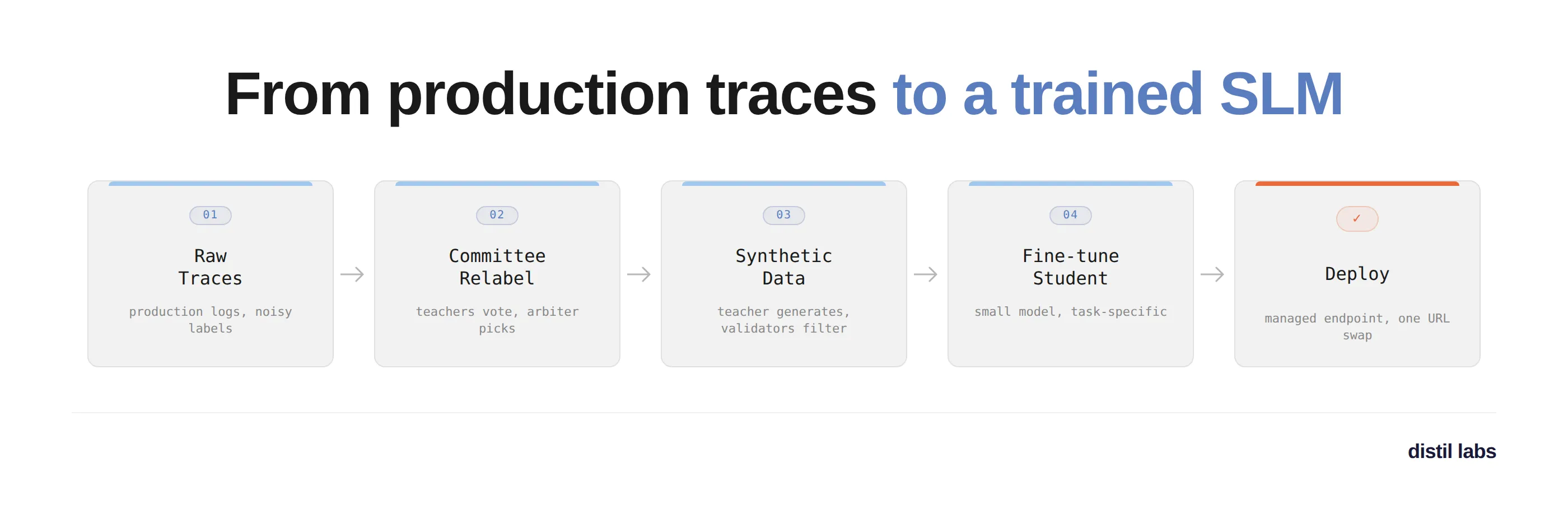

Train an SLM from your production traces with the distil labs Claude skill

A walkthrough of using the distil labs Claude skill to turn 327 noisy production traces into a fine-tuned Qwen3-1.7B multi-turn tool-calling model, deployed on a managed endpoint in a single conversation.

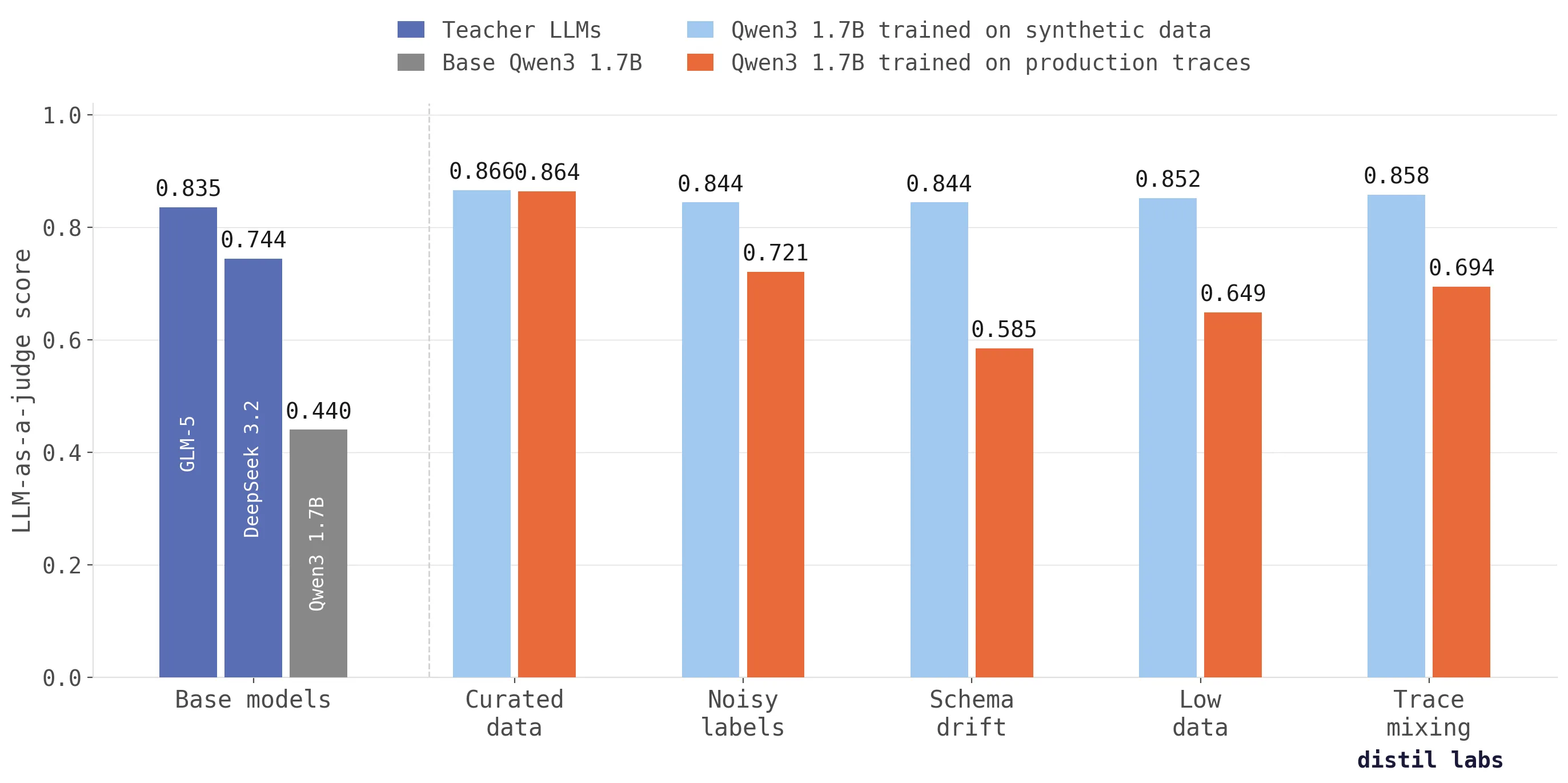

Why training on production traces fails (and what to do instead)

Training directly on production traces doesn't work as well as you'd expect. We tested five scenarios, and models trained on synthetic data generated from the traces scored up to 26 percentage points higher in accuracy.

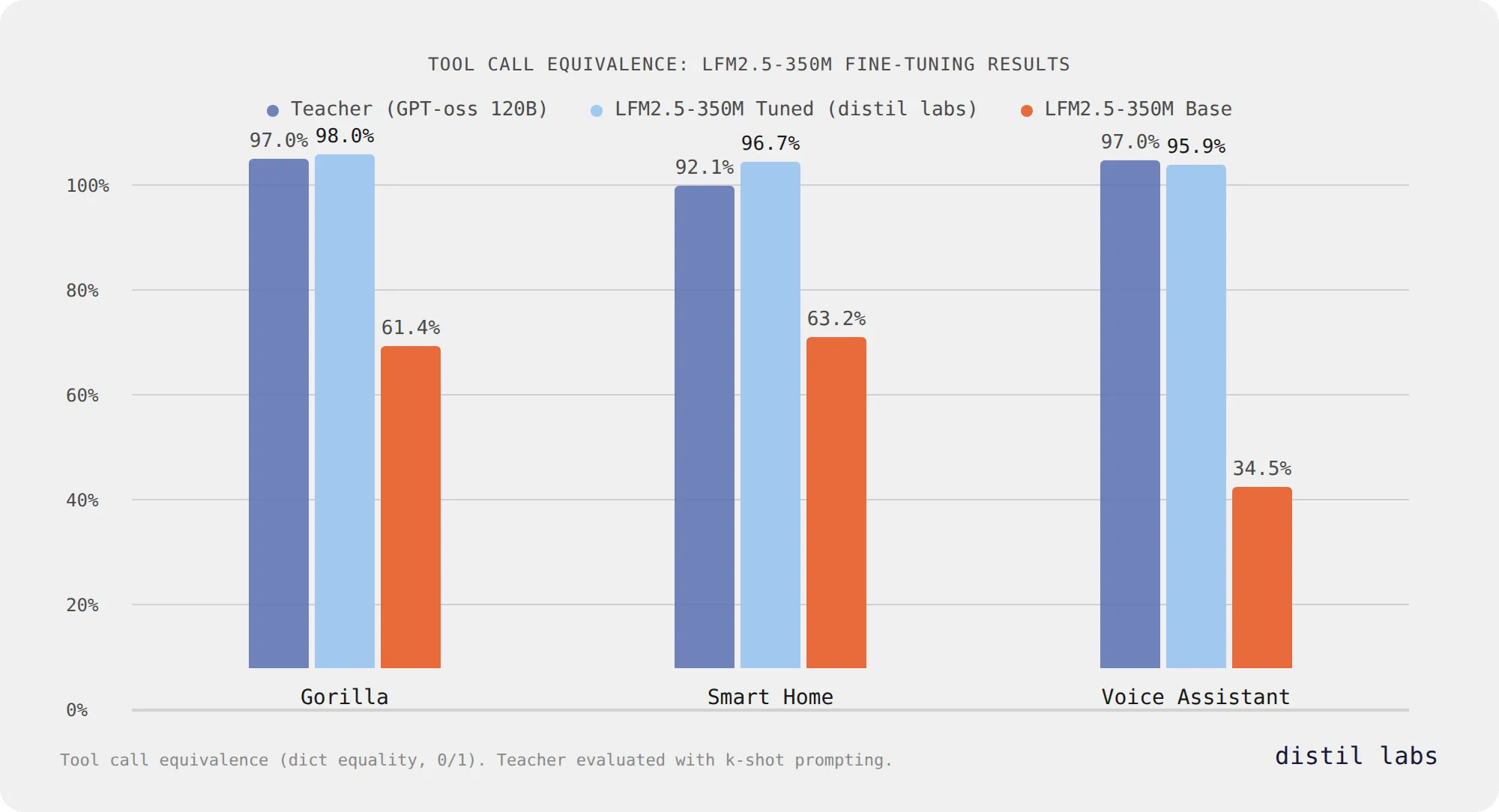

Fine-Tuning Liquid's LFM2.5: Accurate Tool Calling at 350M Parameters

After fine-tuning with distil labs, Liquid AI's LFM2.5-350M reaches 96-98% tool call equivalence across three benchmarks, matching or exceeding a 120B teacher model while staying at 350M parameters.

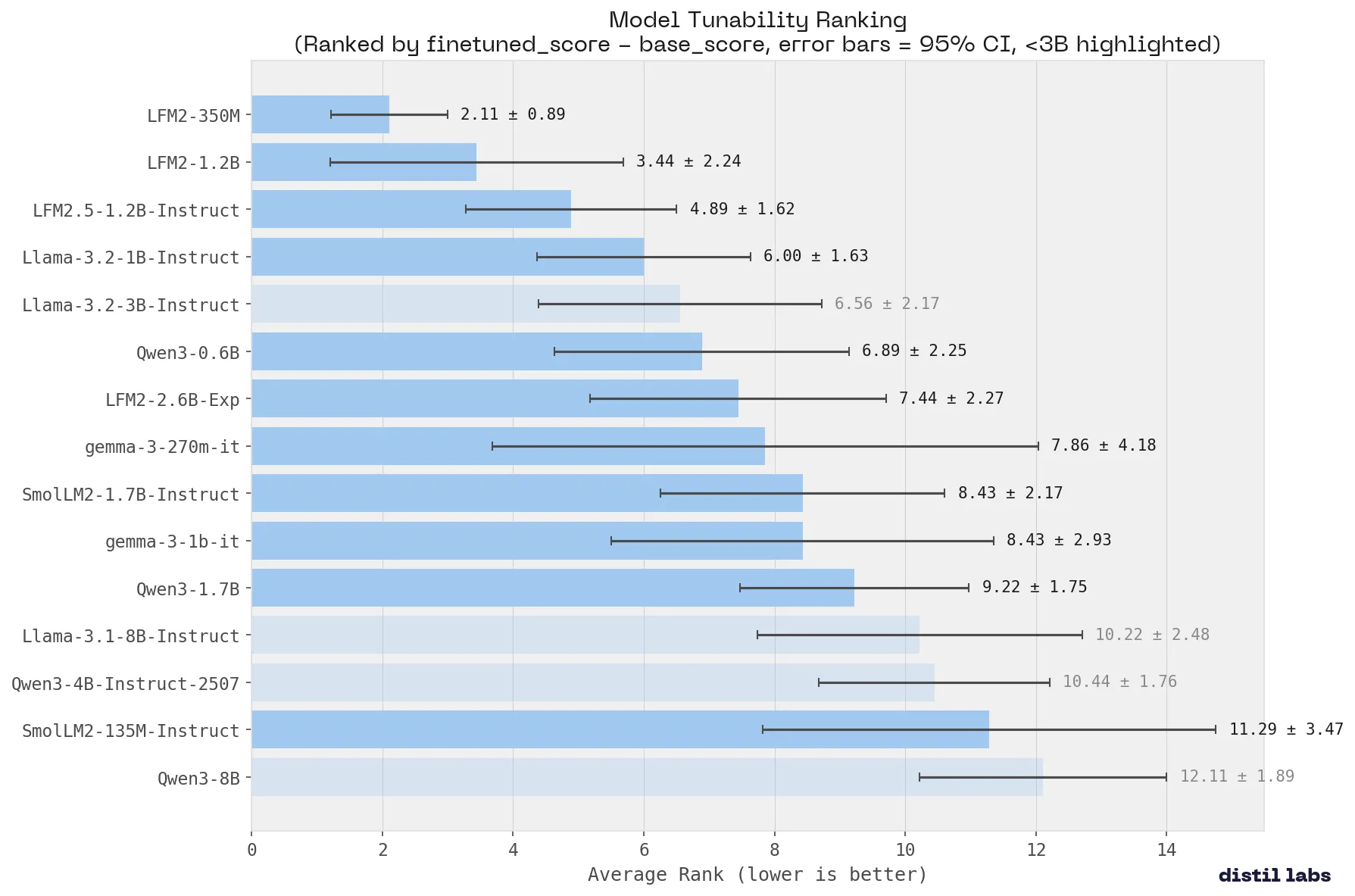

What Small Language Model Is Best for Fine-Tuning

We benchmarked 15 small language models across 9 tasks to find the best base model for fine-tuning. Qwen3-8B ranks #1 overall. Liquid AI's LFM2 family is the most tunable. Fine-tuned Qwen3-4B matches a 120B+ teacher on 8 of 9 benchmarks.

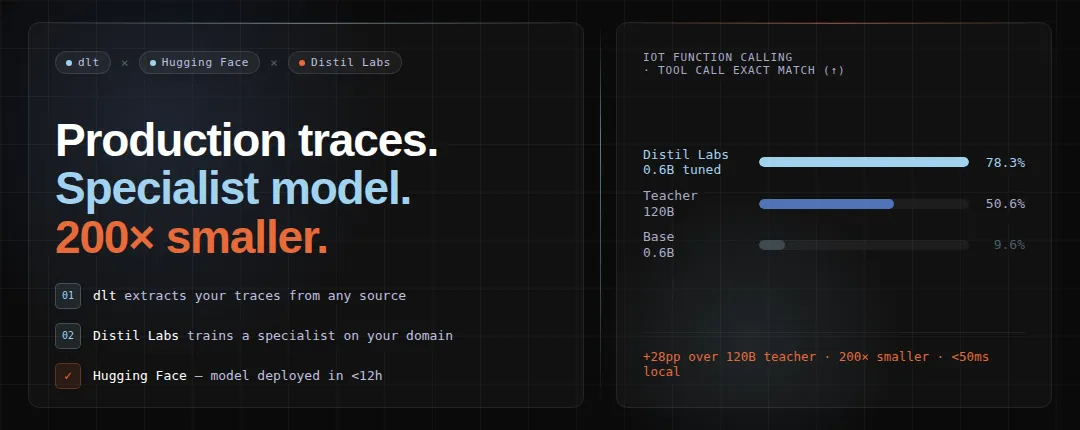

A 0.6B model outperformed a 120B LLM by 29 points - using dlt, distil labs, and Hugging Face

How to turn production LLM traces into a deployed specialist model using dlt for trace extraction and distil labs for training, achieving 79% exact match with a 0.6B model that beats a 120B teacher by 29 points.

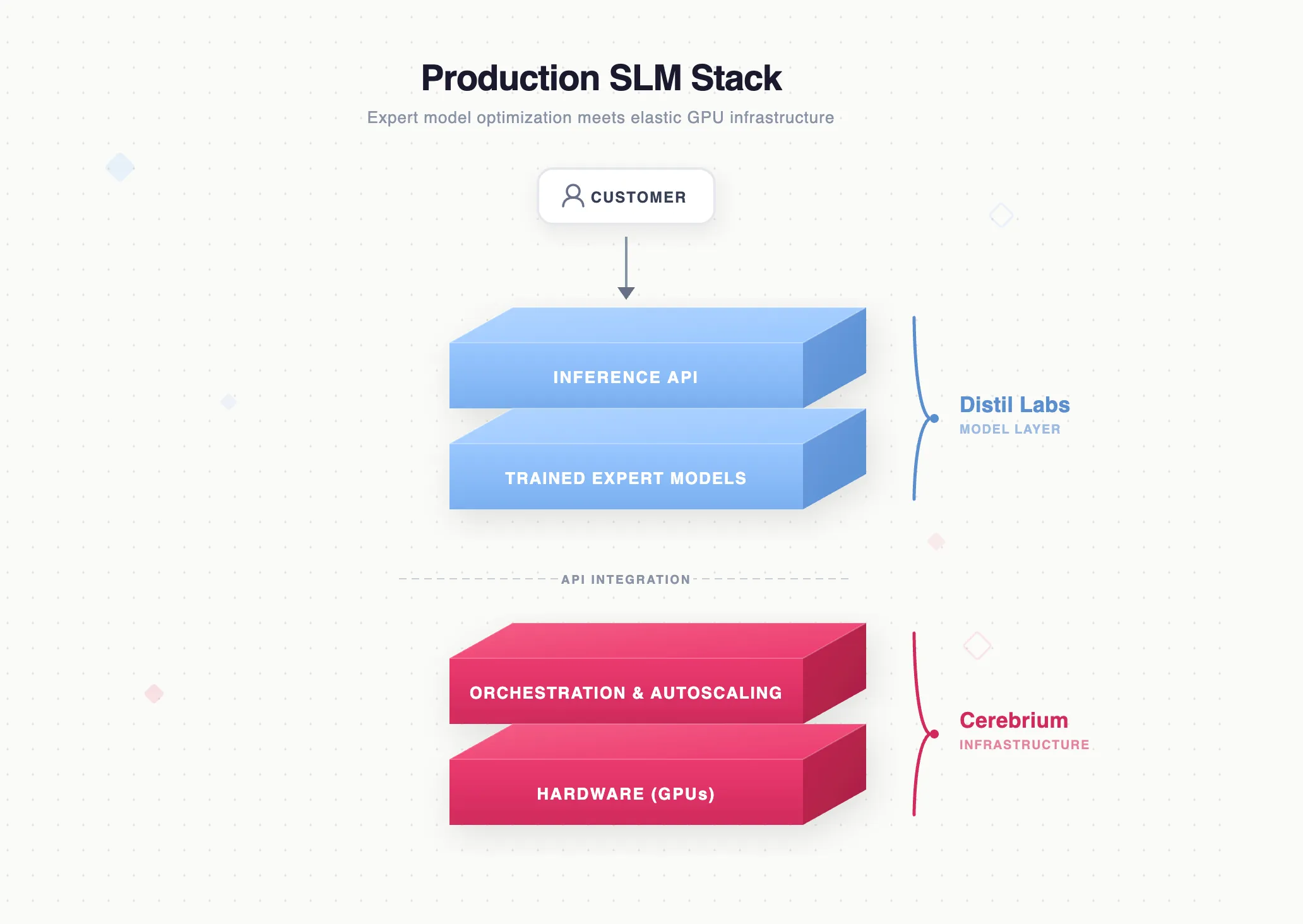

Full-Stack Production Language Models: Expert Model Optimization Meets Scalable GPU Infrastructure

How distil labs and Cerebrium combine expert model optimization with serverless GPU infrastructure to deliver an end-to-end stack for replacing expensive LLM inference with lean, production-grade small-model deployments.

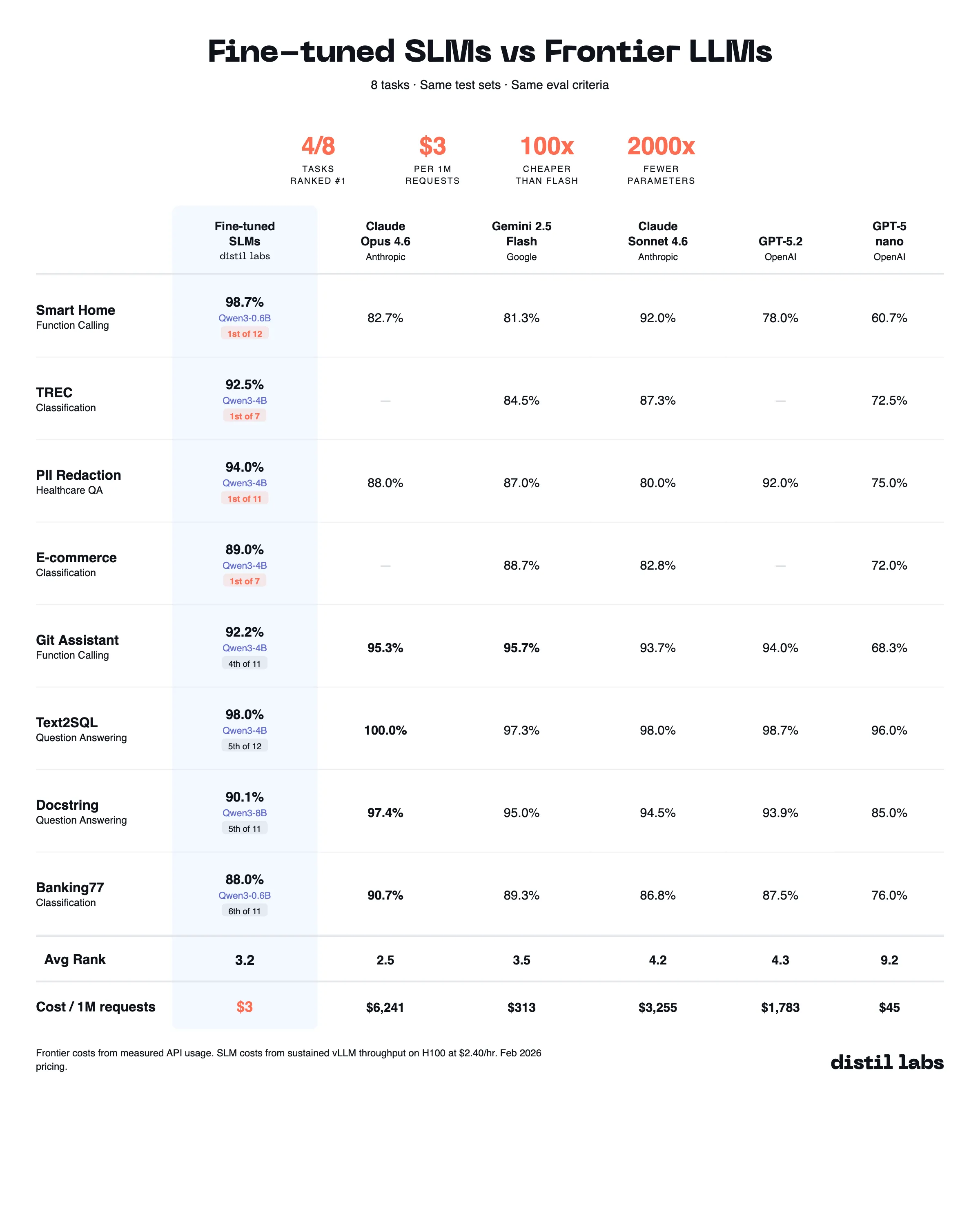

The 10x Inference Tax You Don't Have to Pay

Benchmarking fine-tuned small language models (0.6B-8B) against 10 frontier LLMs across 8 datasets shows that task-specific SLMs match or beat frontier models at 10-100x lower inference cost.