Blog & Demos

Tutorials, case studies, benchmarks, and open-source demos — everything you need to build with small language models.

Resume Roaster AI: Brutally Honest Resume Critique with a Local SLM

A fine-tuned Llama-3.2-3B model that generates sarcastic resume critiques and professional improvement suggestions. Runs entirely locally to keep your personal data private.

distil-commit-bot: AI-Powered Commit Messages for TypeScript

A fine-tuned 0.6B-parameter SLM that generates commit messages for TypeScript codebases. It runs locally via Ollama and reaches 90% accuracy compared with a 120B teacher model, while being 200x smaller.

distil-localdoc.py: Automatic Python Documentation Generation

A fine-tuned Qwen3 0.6B model that generates complete, properly formatted Google-style docstrings for your Python code — runs locally to keep your proprietary code secure.

distil-expenses: Local Personal Expense Summaries with SLMs

Fine-tuned Llama 3.2 models (1B and 3B) for personal expense analysis. Runs locally via Ollama — query your spending data with natural language while keeping your financial data completely private.

distil NPC: Small Language Models for Video Game Characters

A family of small language models specialized for conversational NPCs in video games. Players can talk to game characters in natural language, with the models running entirely on-device and no network connection required.

distil-PII: Family of PII Redaction SLMs

We trained and released a family of small language models specialized for policy-aware PII redaction that dramatically outperform their pre-trained counterparts.

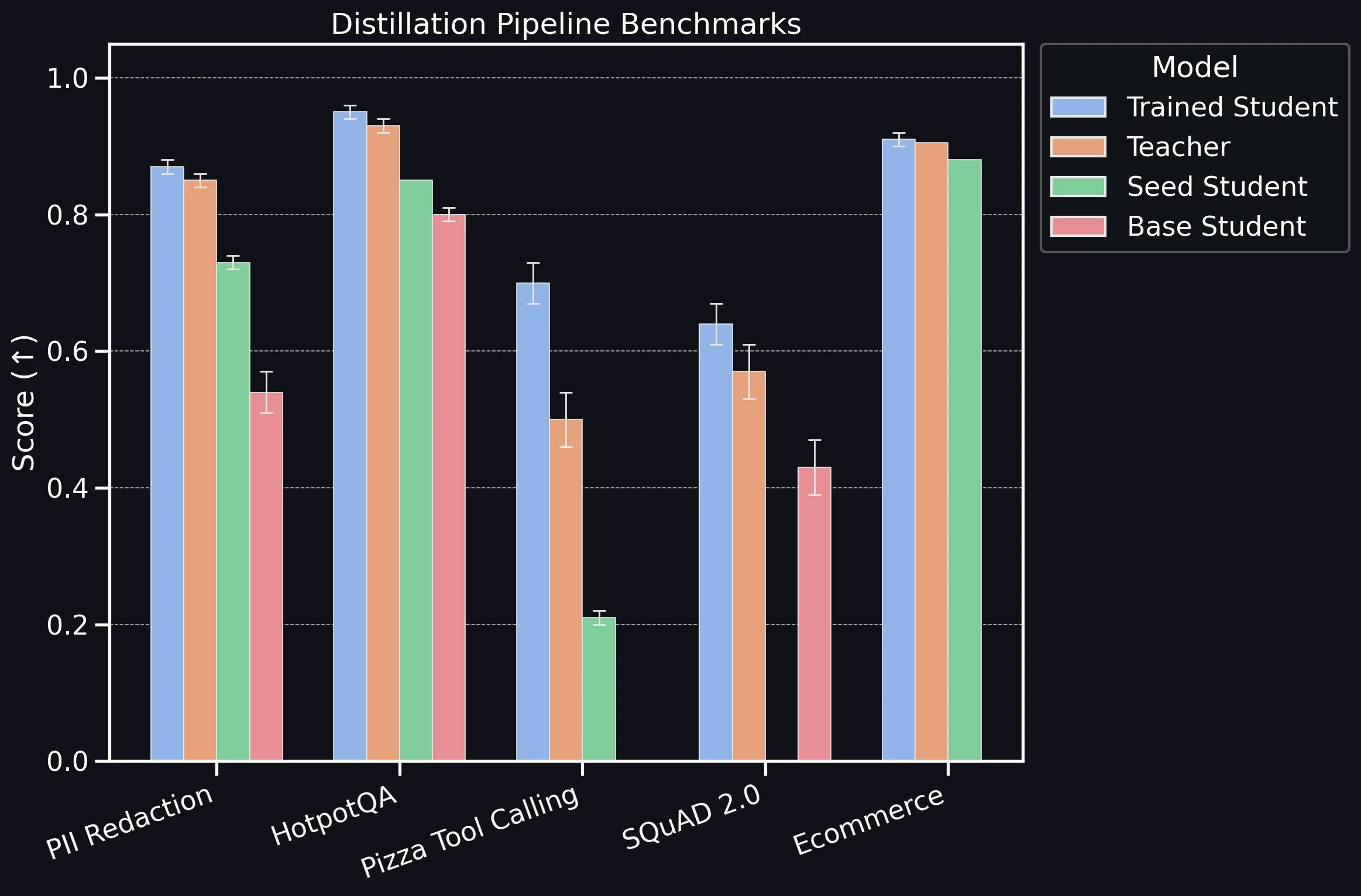

distil labs: Benchmarking the Platform

Benchmarking distil labs' distillation pipeline across classification, information extraction, QA, and tool-calling tasks, showing that the resulting compact SLMs consistently match or exceed their teacher LLMs.

distil labs: Small Models, Big Wins – Using SLMs in Agentic AI

How small language models can match or beat much larger LLMs when fine-tuned for well-scoped tasks, enabling faster, cheaper, and more private agentic AI workflows.

distil labs: Small Expert Agents from 10 Examples

An overview of how distil labs turns a prompt and a few dozen examples into a small, accurate expert agent that matches LLM-level results with models 50-400x smaller.